Today, businesses compete in an increasingly mobile-centric marketplace, with over 6.5 billion smartphone subscriptions worldwide as of 2022 and several hundred million more expected in the next few years. Modern mobile testing teams need to accelerate testing to go to market faster while maintaining overall application quality. However, a key blocker for many mobile development and QA teams is the high cost, inefficiency, and complexity of maintaining an internal test infrastructure.

Many engineering and QA teams think they need to choose between real mobile devices and emulators/simulators when creating an automated mobile testing strategy. The default for mobile testing teams in large enterprises is to use real mobile devices. While this gives them more accurate test results, it is not ideal for implementing and scaling automated testing. Startups and SMBs may ignore real devices altogether because they’re perceived to be too expensive, and opt for the more convenient option: emulators and simulators. In doing so, these teams miss out on the real-world feedback that a mobile device can provide.

The most efficient and effective mobile test strategy uses the best of both worlds: incorporating emulators, simulators, and real mobile devices enables teams to test across the full spectrum of the development lifecycle, from design to post-release.

This guide discusses the strengths and weaknesses of emulators/simulators and real devices, and provides guidance on how to use them together to take your mobile app testing to the next level.

The State of Mobile Development in 2023

But first, let's discuss why having a robust mobile application testing strategy is critical to the growth and success of your business.

Sauce Labs recently commissioned Enterprise Strategy Group (ESG) to conduct an industry research survey to help us better understand the state of mobile application development and testing. ESG polled 300 developers, test engineers, SREs, and product owners at mid-sized and enterprise companies on their mobile development and testing processes and challenges. The research shines a bright spotlight on the tremendous pressure organizations are under:

50% of survey respondents doubled the number of mobile apps they develop in the past three years.

76% of organizations plan to increase their testing capabilities in the next 12-24 months.

Two-thirds or more of companies agree they have issues with the scalability, cost, productivity, and completeness of the increased testing they need to get where they want to be.

41% of developer time is spent on testing apps, which leaves only 59% for coding applications.

65% of research respondents reported that at least 50% of their testing consists of manual workflows and tasks.

75% of participants with highly automated testing processes say their test/QA team is seen as a competitive differentiator.

This last finding really backs up one of our core tenets at Sauce Labs: a combination of manual and automated testing is the best strategy for delivering quality applications at speed. When you deliver code that behaves exactly as it should, every time, you deliver an application experience that benefits both your customers and your company's bottom line.

The Stakes Have Never Been Higher

High-quality mobile apps are not only important revenue generators for many organizations, but they're also key to customer satisfaction. However, customer demands and the increasing complexity of testing introduce new considerations, including:

The high standards for acceptance to app stores require more stringent development processes.

The complexity of native applications mirrors that of client/server applications with the addition of many more variables, such as the wide variety of device and OS combinations.

Testing plays an important role in ensuring apps meet today’s high standards.

Similarly, there is a key difference between the release cycles of native applications and mobile web applications. Updates for web applications can be deployed in minutes or seconds, multiple times per day. They can be automatically accessed by users and rolled back as needed. But for native applications, the release cycles are longer and more complex, and fixing a bug in production can be both costly and time-consuming. For example, an app installed on an end user's device cannot be rolled back, which can lead to multiple versions of the app in production. That's why testing earlier in the release cycle is critical for native apps.

Customer expectations will drive what’s next for mobile app development because the average person spends over four hours a day on mobile apps. Customers want mobile apps that have a mobile-first UX with advanced functionality. Additionally, they expect apps to be stable and free from crashes and bugs. In other words, they expect their apps to just work. Organizations that can't deliver on these expectations risk losing revenue: nearly 1 in 5 users say they won’t wait any length of time for an error to be fixed.

Evolving Market Conditions Require an Evolved Approach to Mobile Testing

The old days of testing mobile apps using in-office device carts or device labs are over. With remote/hybrid work environments becoming the norm, plus the fragmented mobile device and operating system (OS) landscape, maintaining physical devices or devices on premises can be costly, risky, and unsustainable. These challenges can negatively impact the speed and quality of your organization’s mobile app release cycles – and your company’s bottom line.

Today, organizations typically fall under one of two extremes: they either rely entirely on real devices or only on emulators and simulators for their mobile testing. However, there are drawbacks to both approaches.

Organizations that test exclusively on real devices assume they're not compromising on the quality of their tests, while other organizations prefer to use only emulators and simulators because they're faster than real devices, easy to set up, and cost less.

However, both of these extremes are a compromise. Real devices have drawbacks in terms of scalability and cost, while emulators and simulators improve on real devices in those respects but cannot deliver a real-world testing environment.

The ideal mobile testing strategy includes a mix of emulators, simulators, and real devices. This approach addresses the scalability and cost inefficiencies of using real devices while retaining the ability to test under real user conditions.

Let's dive into why this strategy offers teams the best of both worlds, and what adoption looks like in practice.

Mobile Emulators and Simulators: Faster Testing Earlier in the SDLC

The core benefit of emulators and simulators is that they allow teams to test their apps during development. But first things first: what are emulators and simulators?

What is a mobile emulator?

A mobile emulator, as the term suggests, emulates the device software and hardware on a desktop PC, or as part of a cloud testing platform. Because it reproduces the device's hardware in software, the actual mobile operating system can run on top of it. The Android (SDK) emulator is one example.

What is a mobile simulator?

A simulator, on the other hand, delivers a replica of a phone’s user interface and does not represent its hardware. It is a partial re-implementation of the operating system written in a high-level language. The iOS simulator for Apple devices is one such example.

Emulators/simulators vs. device lab

Emulators and simulators enable parallel testing in a way that can’t be achieved with devices in a lab. Because emulators and simulators are software-defined, tests can be run on dozens of them at the click of a button, without manually preparing each one.
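As a rough illustration of that software-defined fan-out, the sketch below runs one placeholder suite against several virtual devices at once using only Python's standard library. The target list and the `run_suite` function are hypothetical stand-ins: a real suite would open an emulator or simulator session inside `run_suite` instead of returning a canned result.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical virtual device targets; names and OS versions are examples only.
TARGETS = [
    {"platform": "Android", "device": "Android Emulator", "os_version": "13"},
    {"platform": "Android", "device": "Android Emulator", "os_version": "12"},
    {"platform": "iOS", "device": "iPhone Simulator", "os_version": "16"},
]


def run_suite(target):
    """Placeholder for one test run on one virtual device.

    A real implementation would open a session on the emulator or
    simulator described by `target` and execute the test suite there.
    """
    label = f"{target['platform']} {target['os_version']}"
    return {"target": label, "passed": True}


def run_in_parallel(targets, max_workers=4):
    """Run the suite on every target concurrently, keeping input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_suite, targets))


results = run_in_parallel(TARGETS)
for result in results:
    print(result["target"], "passed" if result["passed"] else "failed")
```

Adding another OS version or device profile is a one-line change to `TARGETS`, which is the practical difference from preparing another physical device by hand.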

Emulators and simulators are also faster to provision than real devices, as they are software-driven. Additionally, they support test automation through frameworks like Appium, Espresso, and XCUITest.

Where Selenium revolutionized web app testing by pioneering browser-based test automation, Appium is its counterpart for mobile app testing. Appium uses the same WebDriver API that powers Selenium and enables automation of native, hybrid, and mobile web apps. This greatly improves the speed of tests for organizations that were manually testing on real devices. Similarly, enabling test automation with native frameworks (Espresso for Android and XCUITest for iOS) provides better test reliability, speed, and flexibility for native application testing.
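To make the WebDriver connection concrete, here is a minimal sketch of the session capabilities an Appium test might request for an Android emulator and an iOS simulator. It only builds plain dictionaries and does not contact an Appium server. The capability names follow Appium's convention of prefixing its own settings with `appium:`, while the device names, OS versions, and app paths are hypothetical values chosen for illustration.

```python
# Appium driver commonly paired with each platform.
AUTOMATION_BY_PLATFORM = {
    "Android": "UiAutomator2",
    "iOS": "XCUITest",
}


def build_capabilities(platform, device_name, platform_version, app_path):
    """Return a WebDriver-style capabilities dictionary for one virtual device."""
    if platform not in AUTOMATION_BY_PLATFORM:
        raise ValueError(f"Unsupported platform: {platform}")
    return {
        "platformName": platform,
        "appium:automationName": AUTOMATION_BY_PLATFORM[platform],
        "appium:deviceName": device_name,
        "appium:platformVersion": platform_version,
        "appium:app": app_path,
    }


# Hypothetical device names, versions, and app paths.
android_caps = build_capabilities(
    "Android", "Android Emulator", "13", "/path/to/example.apk"
)
ios_caps = build_capabilities(
    "iOS", "iPhone Simulator", "16", "/path/to/Example.app"
)

print(android_caps)
print(ios_caps)
```

An Appium client library would take a dictionary like this when opening a session; the exact call depends on the client and its version, so it is left out here.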

Still, it’s not enough to test on emulators and simulators alone. Real devices are an important part of the mobile application quality process.

Real Mobile Devices for Real-User Feedback

Real device testing is the practice of installing the latest build of a mobile app on a real mobile device to test the app’s functionality, interactions, and integrations in real-world conditions.

Some mobile testing teams choose to eliminate testing on real devices altogether, thinking it will save them money and time. While this may speed up the testing process initially, it comes with a critical drawback: emulators and simulators can’t fully replicate device hardware. This makes it difficult to test against real-world scenarios related to the kernel code, the amount of memory on a device, the Wi-Fi chip, layout changes, and other device-specific features that can't be replicated on an emulator or simulator.

That's why real device testing is a recommended component of a comprehensive mobile app testing strategy, especially when used in combination with emulators and simulators. But while real device testing offers many benefits, there are right and wrong ways to incorporate real devices into your mobile testing strategy.

Testing on local real devices cripples mobile testing

Mobile testing teams that use local devices for testing (devices located on-premises, shipped to remote developers, or centralized in an in-house device lab) soon discover that this “DIY” approach is not ideal for scaling and automating their testing. The disadvantages include not being able to fully automate tests, time spent updating operating systems or apps, and long waits to use targeted devices.

The DIY approach can result in inefficient launches that focus on functionality and user interface but ignore important factors like stability, network conditions, and client performance. Similarly, the lack of pre-production user feedback creates a backlog of issues to troubleshoot, which can delay rollout. And post-launch, mobile testing teams have to rely heavily on users to act as their debugging layer so they can fix issues, which can quickly become very costly while also frustrating customers.

Research shows that 25% of users have written a negative review of a company after a bad experience with a mobile app. Low-quality releases are reflected in poor ratings that flood the app listing, which can result in fewer new installs, fewer daily active users (DAUs), and eventually lost revenue.

Cloud-based real device testing offers many benefits

Organizations using in-house or on-premises test infrastructure typically aim to test on the devices that represent a majority of their user base. This could mean maintaining anywhere from 20 to 50 devices. Even if this is manageable at first, outdated devices need to be constantly replaced with newer devices. Further, maintaining all these devices takes valuable focus away from core testing activities.

A real device cloud can address the issues of in-house test infrastructure by providing on-demand access to real iOS, Android, and other mobile devices (smartphones and tablets) for testing. Like real devices on premises, cloud-based real devices run tests on actual phone hardware and software. The key difference is that the real devices are hosted in a cloud-based test infrastructure and are accessed remotely by sending test scripts to the devices over the web. These scripts are executed on the devices, and test results are sent back in the form of detailed logs, error reports, screenshots, and recorded videos. And there’s no upkeep because the device cloud vendor is responsible for maintaining the devices and adding the latest models once they are publicly launched.

Faster feedback loops are critical for gaining more control over software quality and accomplishing testing goals. A device lab typically lacks adequate monitoring tools, and troubleshooting is often done manually, with a person rerunning each test to replicate the error and find the root cause. With devices in the cloud, mobile testing teams can use robust monitoring tools that track and report on every step of the test and relay the results back for analysis.

Real devices offer diagnostics and signals that instill a higher degree of confidence in an app’s performance in real-world scenarios. Testing for real user conditions (network simulation, localization, GPS, etc.) helps mobile testing teams accelerate the resolution of issues and release of mobile apps by enabling faster determination of root causes of failures in the pipeline and errors in code.

A real device cloud is the next best thing to holding a device in your hand, offering the ability to access and interact virtually with the devices through the cloud, without any of the operational burden of maintaining them in-house. This gives mobile testing teams better flexibility, scalability, visibility, and cost efficiency compared to using devices in a lab.

| | Real Devices | Emulators/Simulators |
| --- | --- | --- |
| Easy to provision | | X |
| Easy to scale | | X |
| Facilitates automation | | X |
| Detect hardware failures | X | |
| Advanced UI testing | X | |
| Easy to maintain | | X |
| Cost-efficient | | X |

When to Use Real Devices vs. Emulators/Simulators for Mobile App Testing

Once emulators and simulators have been added to a mobile testing strategy, there may still be uncertainty about which tests to run on them and which to run on real devices. The answer varies depending on the testing goals of each organization, but certain guiding principles can help.

Real Devices | Emulators/Simulators | |

Functional testing for large integration builds | X | |

UI layout testing | X | X |

Panel/compatibility testing | X | |

System testing | X | |

Display testing (pixels, resolutions) | X | |

Replicate issues to match exact model | X | |

Camera mocking | X | |

UX testing | X | |

Push notifications | X | |

Natural gestures (pinch, zoom, scroll) | X |

Three Approaches for Testing Mobile Applications with Emulators, Simulators, and Real Devices

It's not enough for us simply to tell you to integrate both real devices and emulators/simulators in your mobile testing strategy. There are several different ways you can approach this integration, depending on where you are in your mobile testing journey and the size of your business. Here are three testing approaches to consider.

1. The mobile test automation pyramid approach

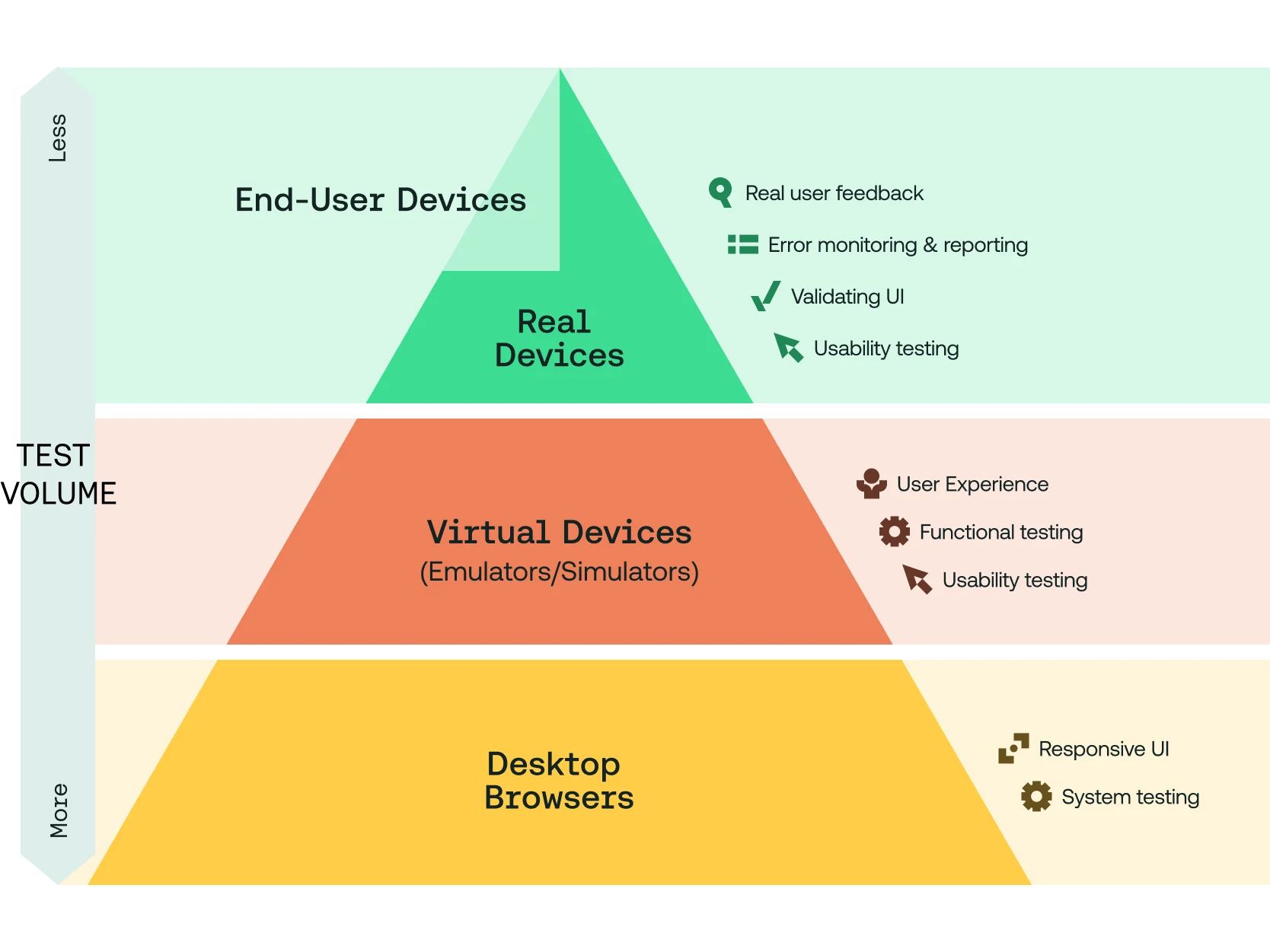

Kwo Ding's mobile test pyramid, an adaptation of Mike Cohn’s original test pyramid, includes three levels of mobile application testing: real devices, mobile simulators and emulators, and desktop browsers (using mobile simulation). We’ve reimagined Ding’s test pyramid to account for the different use cases, personas, and beta testing that today’s mobile engineering teams need for efficient and comprehensive testing.

The mobile test automation pyramid 2.0 is designed to guide mobile testing teams on what to test for each type of mobile app at each stage of the SDLC. This updated approach considers not only the specific testing needs of different personas, but also scaling mobile test automation and the mobile use cases developers are actually testing for.

The mobile test automation pyramid 2.0 still includes the same layers as the original version, but adds a bonus layer at the top for running mobile beta tests on the devices of real users.

2. The hybrid approach for a quick start

A hybrid approach uses emulators, simulators, and real devices according to their unique strengths and weaknesses.

One way to decide where to run mobile tests is based on immediate testing needs. For example, if an app is in the alpha stage, pixel-perfect UI testing isn’t necessary. If mobile testing teams want to run multiple low-level tests in parallel, emulators are the best bet. On the other hand, if the goal is a revamp of the app user interface, and the look and feel and exact color shades matter, it may be best to lean towards real devices.

This hybrid approach of picking and choosing where to run which tests is a great way to start small and not be overwhelmed by all the changes. The key is to start somewhere and build upon the starting point. However, as testing matures, a more structured way of using emulators, simulators, and real devices may be necessary.
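The examples above can be read as a small set of rules. The sketch below encodes them as a Python function, purely for illustration: the parameter names, the order in which the rules are checked, and the default for cases the examples don't cover are all assumptions of this sketch, not a prescribed policy.

```python
VIRTUAL = "emulator/simulator"
REAL = "real device"


def choose_target(app_stage, exact_look_matters=False, parallel_low_level=False):
    """Pick where to run a test based on the immediate testing need."""
    # An alpha-stage app does not need pixel-perfect UI testing.
    if app_stage == "alpha":
        return VIRTUAL
    # Many low-level tests in parallel: emulators are the best bet.
    if parallel_low_level:
        return VIRTUAL
    # A UI revamp where look, feel, and exact colors matter: real devices.
    if exact_look_matters:
        return REAL
    # Assumption of this sketch: default to the cheaper virtual option.
    return VIRTUAL


print(choose_target("alpha", exact_look_matters=True))
print(choose_target("beta", parallel_low_level=True))
print(choose_target("beta", exact_look_matters=True))
```

Starting with a handful of explicit rules like these keeps the decision visible and easy to revise as the team's testing matures.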

3. The T-shaped approach for the mature QA team

The T-shaped approach is a popular analogy in recruiting that’s endorsed by Tim Brown, CEO of IDEO. According to this metaphor, candidates with T-shaped skills are those who have working knowledge of a wide range of skills (the horizontal bar in the ‘T’) and who specialize in one of those skills (the vertical bar). This model can be useful when deciding where to run test scripts.

The T-shaped approach to testing is a great way to leverage emulators, simulators, and real devices according to their strengths. Emulators and simulators are used for the majority of tests across the pipeline to gain wide testing coverage. Meanwhile, real devices are used for specific tests that require in-depth testing and to gain a higher degree of confidence that an app will perform as expected in real-world scenarios.

Following the Continuous Integration (CI) model of development, the goal is to iterate fast and frequently, all through the pipeline, but more so in the initial stages. Emulators and simulators are well suited for this because they are cheaper and easier to provision, scale, and manage than real devices.

In the later stages, when device-specific features are being tested, real devices trump emulators and simulators. Since most of the basic tests are completed with emulators, it’s possible to have far fewer iterations using real devices. Because of the real-world feedback they deliver, the use of real devices can be treated like system testing prior to release.

The T-shaped approach allows automation of an entire testing matrix across emulators, simulators, and real devices, providing real-world results while optimizing costs. This is a winning combination to launch an app successfully, and drive accelerated adoption from its user base.
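One way to picture the T shape is as a split of the test suite: a wide bar of tests that run on emulators and simulators, and a narrow, deep bar of device-specific tests reserved for real devices. The sketch below shows that split in Python. The test names and the `device_specific` flag are hypothetical, and a real pipeline would attach this plan to its own CI stages.

```python
def plan_t_shaped(tests):
    """Split tests into a broad virtual-device bar and a deep real-device bar.

    `tests` is a list of (name, device_specific) pairs.
    """
    plan = {"emulators_simulators": [], "real_devices": []}
    for name, device_specific in tests:
        if device_specific:
            # Depends on real hardware behavior: run in the later stage.
            plan["real_devices"].append(name)
        else:
            # General functional coverage: run early and often.
            plan["emulators_simulators"].append(name)
    return plan


# Hypothetical suite; the flags mark tests that depend on device hardware.
SUITE = [
    ("login_flow", False),
    ("checkout_flow", False),
    ("settings_screen", False),
    ("low_memory_behavior", True),
    ("wifi_reconnect", True),
]

plan = plan_t_shaped(SUITE)
print("Early stages (emulators/simulators):", plan["emulators_simulators"])
print("Later stages (real devices):", plan["real_devices"])
```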

Conclusion

Emulators and simulators are complementary to real devices, but they can’t deliver the real-world environment that a device can deliver for mobile app testing. When used together in an automated testing environment, real devices, emulators, and simulators enable modern mobile development and help testing teams get the greatest impact out of their mobile app testing. Any mobile testing team that takes the quality of their app seriously should consider real devices in the cloud as a key component of their mobile testing strategy.

A real device cloud provides instant and secure access to real devices anytime, easier scalability, expanded device coverage, and no responsibility for maintenance. ESG's research shows that using a real device cloud can accelerate mobile app testing with lower error rates while providing faster feedback loops and reducing testing costs. For companies unable or unwilling to take on the operational burden, costs, and security risks of hosting physical or on-premises real devices, a cloud-based solution like the Sauce Real Device Cloud can take your mobile testing strategy to the next level.
