
Using Data to Improve Test Efficiency

Update: Test Analytics is now Sauce Insights.

Introducing our newest Insights feature: Failure Analysis. Using sophisticated machine learning that works with test pass/fail data, Failure Analysis surfaces the most common test errors, prioritizes them based on how frequently they are occurring, and gives you insight into where you can focus efforts to improve your pass rates.

Testing is Getting Noisy

If you’re reading this, you are probably already aware that the spotlight on testing is brighter than ever before. The research is clear that customer experience, and therefore application quality, is paramount to the success of a digital business. Here are just a few stats:

According to McKinsey & Company, 25% of customers will abandon a brand after just one bad experience.

The Harvard Business Review reported that customers who had the best past experience spend 140% more than customers who had the poorest past experience.

Qualtrics found that 41% of customers either decrease spending or stop spending altogether after a bad experience.

Now more than ever, leadership wants to understand how their quality strategy is mitigating the risk of a poor user experience, and helping build digital confidence throughout their organization. “Automate more!” they say confidently, knowing that manual testing creates bottlenecks to releasing new features to customers. But as test automation begins to scale, new questions arise. Mainly, how do we know if our testing efforts are positively impacting the business? Is this working?

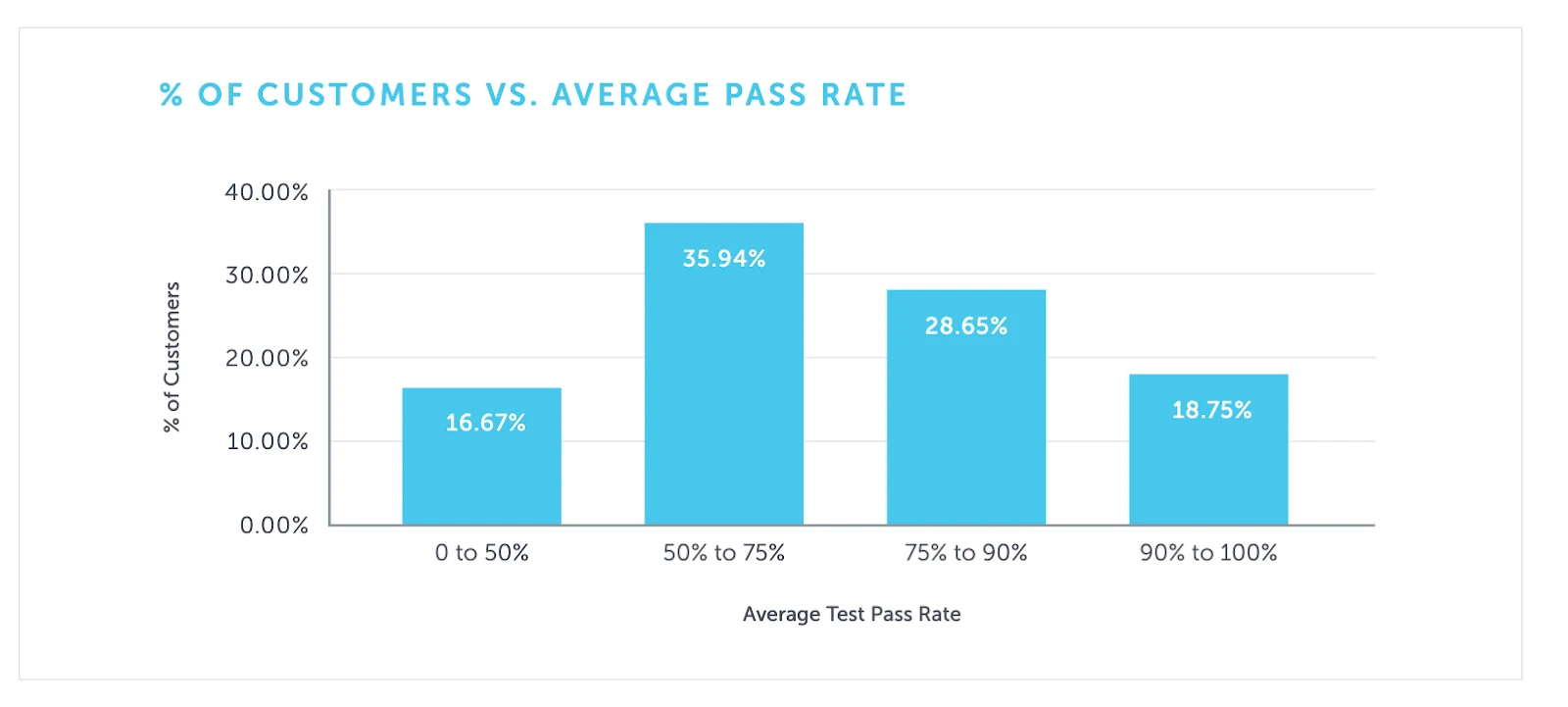

The Sauce Labs 2019 Continuous Testing Benchmark reported that 80% of our users have a test pass rate of less than 90% (see the image above). This means that for most users, more than 10% of their tests need to be investigated. For customers that run tens, or even hundreds of thousands of tests a day, that is a significant number: at 100,000 tests a day, a 90% pass rate still leaves 10,000 failures to look into. Leadership sees failure rates, and wants to understand why these failures are happening and how quickly they can be fixed. Developers want to keep writing new features and innovating, not chasing down and fixing problem tests in what seems to be a never-ending project. It’s a constant balancing act, and a common struggle for teams.

To ease this burden, data insights can proactively measure testing efficacy, and can even uncover where failures occur. But that’s where most of these measurements end: they tell you where the problems are, but not how to fix them. To really make a difference:

Developers and QA need a smart way to understand why tests are failing, and insight into how often those types of failures are occurring across the test suite. This way, they can focus on the most pervasive issues, and get the most “bang for their buck” when fixing tests.

Management wants a single view that shows the ongoing health of their quality strategy, and how it justifies their spend (on tools, people, and automation in general), and builds confidence that testing is mitigating the risk of poor user experience.

What is Sauce Doing About This?

Our team has been interviewing customers of all sizes, and across industries, to understand how our Continuous Testing Cloud can help make them better testers. One of the most commonly discussed topics with our customers is the concept of failure analysis. How can we leverage test data with technology to offer insight into where the most pervasive issues are in a test suite, and help make sense of all of the noise test failures can create?

To that end, we are happy to announce the availability of our newest Insights feature: Failure Analysis. Using sophisticated machine learning that works with test pass/fail data, Failure Analysis surfaces the most common test errors, prioritizes them based on how frequently they are occurring, and gives you insight into where you can focus efforts to improve your pass rates. This improves developer efficiency, because developers can fix the most pervasive issues more quickly. And leadership can see the positive impact of testing efforts, and rest assured that their investment in automation is directly influencing user experience.
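To make the idea of "surfacing the most common errors by frequency" concrete, here is a minimal sketch in Python. It is not how Failure Analysis works internally (that uses machine learning, and its details are not described here); it is a plain frequency count over failed tests, and the names `normalize` and `rank_failures` are hypothetical helpers invented for this illustration.

```python
import re
from collections import Counter


def normalize(message: str) -> str:
    """Collapse volatile details so that similar errors group together.

    Assumption for this sketch: quoted values and numbers are the only
    parts of an error message that vary between otherwise identical failures.
    """
    message = re.sub(r"['\"][^'\"]*['\"]", "<value>", message)
    message = re.sub(r"\d+", "<n>", message)
    return message.strip().lower()


def rank_failures(results):
    """Return (error pattern, count) pairs, most frequent first.

    `results` is an iterable of (passed, error_message) tuples, where
    error_message is None for passing tests.
    """
    counts = Counter(
        normalize(error) for passed, error in results if not passed and error
    )
    return counts.most_common()


if __name__ == "__main__":
    sample = [
        (True, None),
        (False, "Timeout after 30 seconds waiting for element '#login'"),
        (False, "Timeout after 45 seconds waiting for element '#cart'"),
        (False, "NoSuchElementException: unable to locate '#submit'"),
    ]
    for pattern, count in rank_failures(sample):
        print(count, pattern)
```

Even this simple grouping shows why frequency matters: two of the three failures in the sample share one root pattern, so fixing that pattern first gives the biggest improvement in pass rate.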

Trying Failure Analysis

Our team is excited to offer Failure Analysis to our customers and get your feedback on this new and evolving feature. Read below to see if you qualify for early access to Failure Analysis:

Failure Analysis currently works with any Selenium or Appium tests. It does not work for XCUITest or Espresso at this time.

For now, this feature is available to our Enterprise contract customers. Anyone on our online subscription plans, free trial, or Open Sauce will have access at a later date.

To begin using Failure Analysis, you must configure your tests to send pass/fail data to Sauce Labs. This feature uses machine learning to analyze pass/fail data and present common failure patterns. To enable this, see Setting Test Status to Pass or Fail in our documentation.
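As a rough illustration of sending pass/fail data from a Selenium test, the sketch below assumes the `sauce:job-result` JavaScript executor command covered in the Sauce Labs documentation. The helper names `report_result` and `run_with_status` are hypothetical, and `driver` is assumed to be a Selenium WebDriver session already connected to Sauce Labs. Check the documentation for the method that fits your framework.

```python
def report_result(driver, passed: bool) -> str:
    """Tell Sauce Labs whether the current job passed or failed.

    Assumption: the session runs on Sauce Labs, which interprets the
    "sauce:job-result=..." string instead of executing it as JavaScript.
    """
    script = "sauce:job-result=" + ("passed" if passed else "failed")
    driver.execute_script(script)
    return script


def run_with_status(driver, test_body) -> bool:
    """Run test_body(driver), then always report the outcome and quit.

    An AssertionError counts as a failed test. Any other exception is
    reported as a failure too, and then re-raised to the caller.
    """
    passed = False
    try:
        test_body(driver)
        passed = True
    except AssertionError:
        passed = False
    finally:
        report_result(driver, passed)
        driver.quit()
    return passed
```

Reporting from a `finally` block means the status is sent even when the test raises, so a failed test is recorded as a failure rather than left without a result.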

If you’re interested in trying out Failure Analysis, please send an email to failureanalysis@saucelabs.com, and our team will respond with more information on getting started.