Welcome to our series about non-functional testing! Here, we’ll explain the differences and overlaps between several types of non-functional testing, and how they intersect with the functional testing world.

Today we'll talk about Performance Testing, both broadly and in detail. It encompasses far more than you could put into a single article – it’s an entire career path – but as QA professionals, we should understand the impact and importance of Performance Testing for a business. Hopefully, this article will be a bridge to better communication and understanding with these critical teams.

What Is Performance Testing?

Performance testing evaluates a system’s stability and responsiveness under given workloads. The goal is to find and eliminate anything that might cause performance problems by measuring factors such as:

Application and command response times

The velocity of data transfer

Stability under various workloads

Concurrent user volume

Memory consumption

Network bandwidth usage

Workload efficiency

Functional Testing vs. Performance Testing

Functional testing means going through user flows to ensure that the business logic of any workflow meets requirements and provides a successful experience to the user. It’s important to note the phrase “the user” (i.e., one user). Functional testing usually involves one user at a time: filling out forms, clicking buttons, and simulating a real user on your site. Multiple users (or automated processes) can do this testing in parallel, but typically there won’t be enough simultaneous users to cause the site any particular stress.

To do functional testing, you usually want to go through positive and negative test cases, using varying data sets, ensuring that each path through the workflow is a success.

In comparison, performance testing uses simplified versions of these workflows, but with enough users working in parallel to test the system’s infrastructure, not its business logic.

When a functional test is successful, you’ll have confidence that a given user can accomplish their task.

When a performance test is successful, you’ll have confidence that the site will work successfully for many users at the same time.

Types of Performance Testing

This isn’t a complete list, but these encompass 80-90% of the work:

Stress/Capacity Testing - Testing the upper limits of a system’s capacity to handle the load.

Load Testing - Understanding the behavior and changes a system experiences at different levels of traffic.

Soak/Endurance Testing - Measuring a system’s performance over time with predictable and unpredictable patterns of usage.

Volume Testing - Subjecting a system to huge volumes of data, to see how databases and APIs behave as the data grows.

Scalability Testing - Testing a system’s ability to scale with increased traffic, including the ability to expand between cloud regions.

Spike Testing - Observing a system’s behavior when large amounts of traffic occur at the same time. Black Friday traffic is one example. Taylor Swift or Beyoncé announcing a tour would be another.

Breakpoint Testing - Similar to stress testing, breakpoint testing helps determine the maximum capacity at which a system still meets its minimum requirements. Sometimes also called capacity testing, it applies incremental loads until things “break.”

Internet Testing - Internet testing helps determine end-user performance over various internet speeds and connections.

Isolation Testing - Isolation testing breaks down the system into different modules for testing. Most commonly, this is done when performance issues or bugs are challenging to find. Isolating various modules helps testers narrow the search until bugs are identified for resolution.
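To make the idea of incremental load concrete, here is a minimal sketch of a breakpoint test in Python. It steps up the number of users against a simulated system and reports the last level that still met a latency requirement. The `simulated_latency_ms` model, its capacity of 500 users, and the 800 ms limit are all invented for illustration; in a real test, each step would drive actual traffic and read measured latencies.

```python
def simulated_latency_ms(users: int, capacity: int = 500) -> float:
    """Hypothetical latency model: gentle growth up to capacity, sharp growth after."""
    base = 120.0
    if users <= capacity:
        return base + 0.2 * users
    overload = users - capacity
    return base + 0.2 * capacity + 5.0 * overload


def find_breakpoint(start: int, step: int, max_users: int, limit_ms: float):
    """Raise the load step by step and return the last level that met limit_ms.

    Returns None if even the first step misses the requirement.
    """
    last_ok = None
    for users in range(start, max_users + 1, step):
        if simulated_latency_ms(users) > limit_ms:
            break  # the requirement is no longer met: this is the "break" point
        last_ok = users
    return last_ok


if __name__ == "__main__":
    level = find_breakpoint(start=100, step=100, max_users=2000, limit_ms=800.0)
    print(f"Last load level within the limit: {level} users")
```

Smaller steps give a more precise breakpoint at the cost of a longer test run.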

Sub-sub-genres

Availability and Resilience Testing - constant monitoring, sometimes called “heartbeat” monitoring, to alert and summon people whenever a given system reports an outage.

Failover Testing - to ensure that if you have an outage in one region or server cluster, your system can find its way to another, ensuring minimal or zero downtime. The ability to turn blackouts into brownouts is a major milestone for any software team.

Disaster Recovery - ensuring that when your system is down, you don’t lose valuable data, and you can come back online quickly. Just be sure to actually test the recovery piece.

Not every organization needs every kind of performance testing. I’ll say again that it’s extremely important to understand your business, your users, and your product architecture. There is no one-size-fits-all approach. If you run a retirement community management platform, your traffic patterns, demographics, and technology choices will be radically different from a platform like TikTok.

Common Performance Issues

These are the most common performance issues, but not the only ones.

Slow Response Times

Slow response times can be due to bad code, database issues, or server limitations. You can try several things to fix this issue, like optimizing code, improving database indexing, and scaling server resources. Consider caching frequently accessed data to reduce load times.
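As one small illustration of caching frequently accessed data, the sketch below wraps a slow lookup with `functools.lru_cache` from the Python standard library. The `fetch_product_name` function and its 50 ms delay are hypothetical stand-ins for a slow database query or remote call.

```python
import time
from functools import lru_cache


def fetch_product_name(product_id: int) -> str:
    """Hypothetical slow backend call (simulated with a short sleep)."""
    time.sleep(0.05)
    return f"product-{product_id}"


@lru_cache(maxsize=1024)
def cached_product_name(product_id: int) -> str:
    # The first call per product_id hits the backend; repeats come from memory.
    return fetch_product_name(product_id)


def timed(fn, *args):
    """Return (result, elapsed_seconds) for a single call."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start


if __name__ == "__main__":
    _, cold = timed(cached_product_name, 42)
    _, warm = timed(cached_product_name, 42)
    print(f"cold: {cold * 1000:.1f} ms, warm: {warm * 1000:.1f} ms")
    print(cached_product_name.cache_info())
```

An in-process cache like this does not handle invalidation, so it suits data that changes rarely. Shared or frequently updated data usually calls for an external cache with an expiry policy.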

High Latency

Latency issues can come from slow networks, inefficient data processing, or underperforming servers. To fix these, you could upgrade your network, streamline your data handling, or boost server performance. Using CDNs can also help cut down latency for users in different locations.

System Crashes

Crashes may result from exceeding system limits, memory leaks, or unhandled exceptions. Implement robust error handling, monitor memory usage, and conduct stress testing to identify potential limits. Always ensure systems have failover mechanisms in place to handle unexpected load increases.

Bottlenecks

Bottlenecks can pop up in your code, database queries, or network. Use profiling tools to spot and fix these issues. Focus on speeding up key code sections, simplifying database queries, and boosting network performance.
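For code-level bottlenecks, a profiler shows where the time actually goes. This minimal sketch uses `cProfile` and `pstats` from the Python standard library. The `slow_section` and `fast_section` functions are invented for the example, with one made deliberately wasteful so it stands out in the report.

```python
import cProfile
import io
import pstats


def slow_section(n: int) -> int:
    """Deliberately wasteful: quadratic work."""
    total = 0
    for i in range(n):
        for j in range(n):
            total += i * j
    return total


def fast_section(n: int) -> int:
    return sum(range(n))


def handle_request(n: int = 200) -> int:
    return slow_section(n) + fast_section(n)


def profile_report(top: int = 5) -> str:
    """Profile one handle_request call and return the top entries as text."""
    profiler = cProfile.Profile()
    profiler.runcall(handle_request)
    buffer = io.StringIO()
    stats = pstats.Stats(profiler, stream=buffer)
    stats.sort_stats("cumulative").print_stats(top)
    return buffer.getvalue()


if __name__ == "__main__":
    print(profile_report())
```

Sorting by cumulative time puts the functions that dominate a request near the top, which is usually the right place to start optimizing.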

The Finer Points of Performance Testing

We hear about the same development trends across the industry:

From on-premise to cloud-based servers

From monolithic applications to microservices

From monthly (or quarterly–or yearly) to weekly (or hourly–or per commit) releases

From proprietary software to open source

From custom-built servers to fleet-managed containers

But as omnipresent as these movements are, there are as many different ways to put a tech stack together as there are snarky comments on Stack Overflow. Even though websites are fundamentally just HTTP and API calls through a browser, every architectural decision along the way will influence how an application performs at scale.

I said that performance testing seeks to test infrastructure, not business logic. That’s broadly true, but it implies that anyone who knows performance testing can work on any software. In fact, there are cases where the fundamental construction of the software, including the business logic, can impact performance.

These are somewhat rare examples, and the list isn’t complete, but they’re worth considering:

A repository of images uploaded by users or affiliates (e.g., product photos or profile pictures). Performance testing could reveal opportunities (at scale) to compress images or ensure redirects are correct.

A complicated workflow that is only used seasonally, like tax software or flower delivery. If different teams work on these flows and don’t keep up with performance testing, the stress on these workflows could bleed over into other areas of the software.

Another pointer to consider: an outage doesn’t have to mean that all your pages are down. At one major eCommerce site, our practice was to monetize the 404 “page not found” errors. If part of the site had an error, we designed pages that made it clear that the user had encountered something unexpected, but we still provided value to that user.

404 pages don’t have to be ugly!

We had a “red button” static HTML site that would deploy if an outage was ever bad enough. It was hosted in a different cloud environment, was always available, and still provided revenue for the company. It wasn’t as good as not needing to use the red button, but it mitigated our disasters, and didn’t ruin the user experience.

Don’t Be a Silo

It’s as critical to understand how these different kinds of performance tests work with each other as it is to understand how they work with other departments and other testing teams. For example, if your company is a major eCommerce site or a large consumer bank, you’ll need to make sure that your user analytics events figure into your performance calculations; otherwise, you’ll be opening yourself up to risks. When these kinds of systems fail, your ability to understand what’s really going on with your users diminishes greatly.

Many organizations I’ve worked in have a separate team for performance testing. They rarely talk to people outside of SRE, and they don’t have a close relationship with the business. Don’t be content with that scenario: seek out the stakeholders, and convince them that you’re there to help them manage risk. They will listen.

How To Run Performance Tests

To put it simply, begin by clearly defining your test objectives and developing realistic scenarios that reflect how users interact with your system. Set up these scenarios to simulate different load conditions, such as varying numbers of simultaneous users or peak traffic times. Next, use performance testing tools to execute these scenarios and collect data on key performance metrics like response times, throughput, and resource utilization.
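The steps above can be sketched in a few lines of Python. This example is only an illustration, not a substitute for a dedicated performance testing tool: `simulated_request` is a hypothetical stand-in that sleeps for 10 ms instead of calling a real system, and the user counts are arbitrary. It runs a scenario with a pool of concurrent “users” and collects response times and throughput.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def simulated_request(_request_id: int) -> float:
    """Hypothetical stand-in for one user action; returns elapsed seconds."""
    start = time.perf_counter()
    time.sleep(0.01)  # swap in a real call against a test environment
    return time.perf_counter() - start


def run_load(concurrent_users: int, requests_per_user: int) -> dict:
    """Run the scenario at a given concurrency and summarize key metrics."""
    total = concurrent_users * requests_per_user
    started = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        latencies = list(pool.map(simulated_request, range(total)))
    elapsed = time.perf_counter() - started
    cuts = statistics.quantiles(latencies, n=100)  # 99 percentile cut points
    return {
        "requests": total,
        "throughput_rps": total / elapsed,
        "median_ms": statistics.median(latencies) * 1000,
        "p95_ms": cuts[94] * 1000,
    }


if __name__ == "__main__":
    for users in (5, 20, 50):  # different load conditions
        print(users, run_load(concurrent_users=users, requests_per_user=10))
```

Reporting a high percentile alongside the median matters because averages tend to hide the slow requests that users actually notice.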

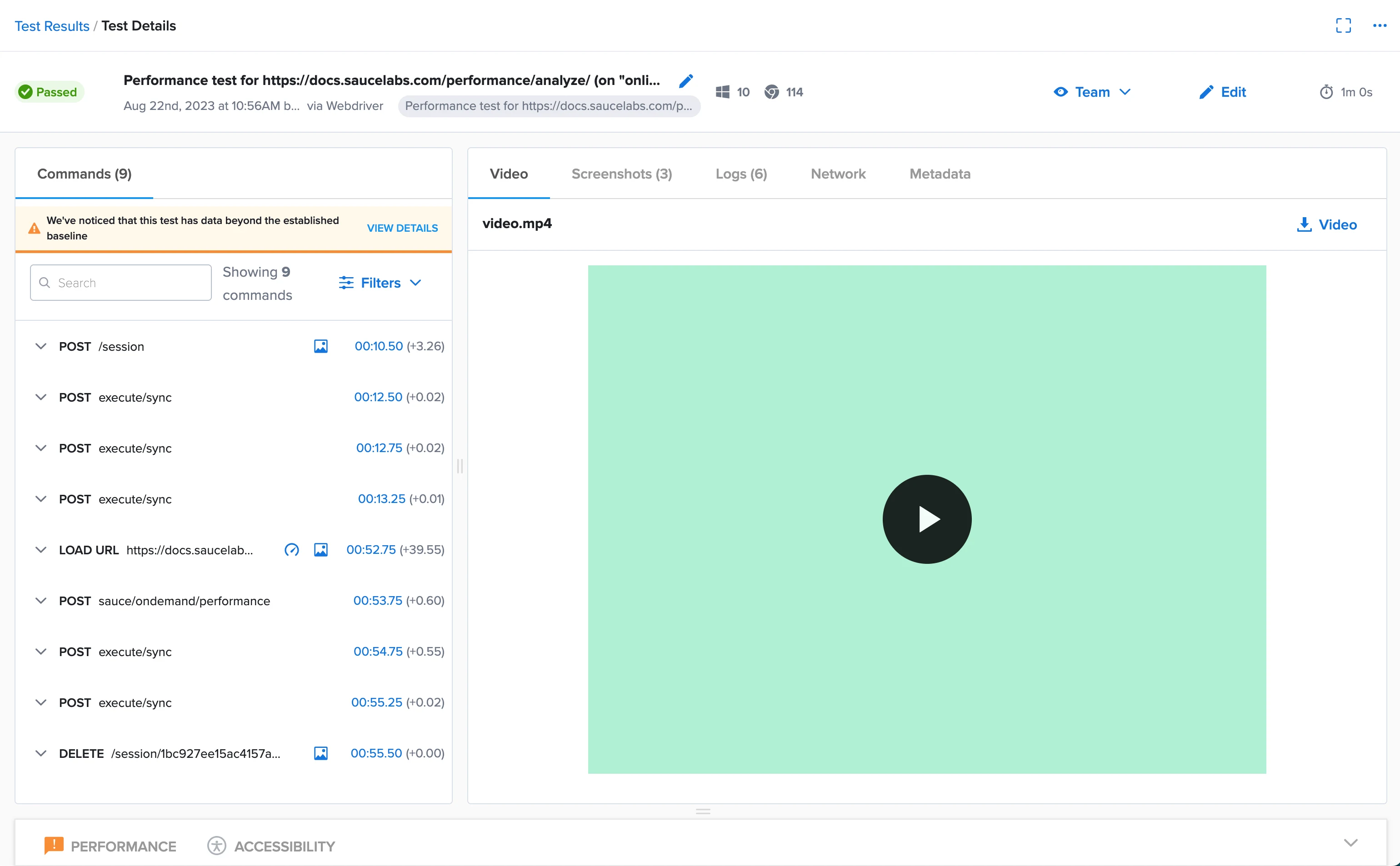

A platform like Sauce Labs simplifies the process with a cloud-based environment where you can run performance tests across a wide range of devices and configurations.

Conclusion

In my previous article about how non-functional testing could have saved a major ticketing site a lot of trouble, I stated that the issues around a problem of that magnitude aren’t limited to just performance testing. To properly anticipate the impact of 14 million users accessing your website when you only expected 2 million, you also need to consider security and chaos testing.

Why? Because this discipline is no longer limited to “whether or not the machine can handle X connections.” You need to understand the nature of the connections as well as the nature of your system when it experiences a partial or full outage.

Please stay with us on this journey exploring different areas of non-functional testing, including an upcoming look at security and chaos testing.

To get started with non-functional testing, sign up for a free trial of Sauce Labs.